What Makes a Good Test?

At a large insurer, we had a checkout-style flow in an internal policy app that looked green in CI for three straight days while the actual save operation was failing in production-like environments. The test passed because it only checked that a success banner appeared. The API call behind the button was returning a 500, the record never persisted, and our “good” test was happily asserting on a lie.

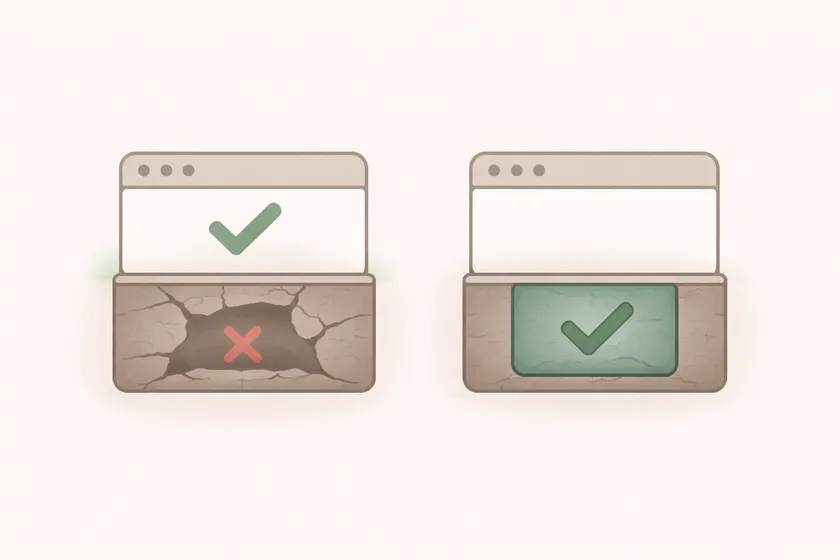

That is the difference between a test that runs and a test that protects you. A good test does not just confirm that the UI moved. It proves the behavior that matters actually happened.

The test that lied to us

The original version of the test looked fine in review:

    @Test
    public void submitsPolicy() {
        checkoutPage.fillRequiredFields();
        checkoutPage.clickSubmit();
        assertEquals("Saved successfully", checkoutPage.getBannerText());
    }

The problem is not that the assertion is technically wrong. The problem is that it is too shallow for the risk. A transient UI banner is a poor proxy for a workflow whose real purpose is “did we create a policy record with the expected data?”

The stronger version checked the real outcome:

    @Test
    public void createsPolicyRecordAfterSubmission() {
        checkoutPage.fillRequiredFields();
        checkoutPage.clickSubmit();

        assertTrue(policyApi.policyExists(testPolicyNumber));
        assertEquals("ACTIVE", policyApi.getStatus(testPolicyNumber));
    }

Same flow. Completely different confidence level.

That distinction matters more as your suite grows. On a 20-test demo project, a weak assertion is an annoyance. On a 600-test enterprise suite that gates releases, weak assertions create false confidence at scale. That is worse than having no test at all, because a missing test at least does not pretend to protect you.

1. A good test verifies behavior, not motion

The fastest way to spot a weak test is to ask: “If this passes, what do I actually know now?”

If the answer is only “the button was clickable” or “the page changed,” the test is probably not deep enough.

Prefer outcome assertions over UI ceremony

UI steps are usually how you reach the behavior. They are not always the best place to verify it.

For example:

- Clicking “Place Order” is an action

- Seeing a spinner disappear is a UI event

- Seeing a toast is a hint

- Verifying an order record exists is the behavior

That does not mean every UI test needs a database assertion. It means your assertion should line up with the real contract of the feature. If the feature’s real value is “user can log in,” then a title change is weaker than checking for an authenticated-only element. If the feature’s value is “user saved a setting,” then a green toast is weaker than checking the setting persisted on reload.
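The gap between a toast and a persisted setting can be sketched in a few lines. This is a minimal illustration, not code from the projects described here: `SettingsStore`, `SettingsScreen`, and `SettingChecks` are hypothetical in-memory stand-ins for the backend, the page object, and the test body, and the screen is deliberately written with the bug of showing success without checking the write.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for the backend that persists user settings.
class SettingsStore {
    private final Map<String, String> saved = new HashMap<>();
    private final boolean failWrites;

    SettingsStore(boolean failWrites) {
        this.failWrites = failWrites;
    }

    // Returns false when the write is rejected, similar to an API returning a 500.
    boolean write(String key, String value) {
        if (failWrites) {
            return false;
        }
        saved.put(key, value);
        return true;
    }

    String read(String key) {
        return saved.get(key);
    }
}

// Hypothetical page object with a bug: it shows the success toast
// without checking whether the write succeeded.
class SettingsScreen {
    private final SettingsStore store;
    private String toast = "";

    SettingsScreen(SettingsStore store) {
        this.store = store;
    }

    void save(String key, String value) {
        store.write(key, value); // result ignored: this is the bug
        toast = "Saved";
    }

    String toastText() {
        return toast;
    }

    // Simulates a reload: the value comes from the store, not from the form.
    String valueAfterReload(String key) {
        return store.read(key);
    }
}

class SettingChecks {
    // Shallow check: only proves that the UI announced success.
    static boolean toastCheckPasses(SettingsScreen screen) {
        screen.save("timezone", "UTC");
        return "Saved".equals(screen.toastText());
    }

    // Outcome check: proves the setting is still there after a reload.
    static boolean persistenceCheckPasses(SettingsScreen screen) {
        screen.save("timezone", "UTC");
        return "UTC".equals(screen.valueAfterReload("timezone"));
    }
}
```

With `new SettingsStore(true)` simulating a failing backend, the toast check still passes while the persistence check fails. Only the second check notices the failure that matters.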

This is one reason I push teams toward stronger locator and assertion patterns in general. If your assertion is hanging off a brittle selector or a vague text check, you are already lowering the ceiling on test quality. Text-based locators that match how users think make the test clearer, but the real win is that they encourage you to assert on visible behavior instead of DOM trivia.

Ask what could still be broken if this passes

This one question catches a surprising amount of bad test design.

If your login test passes, could the account still be locked? Could the user still be unauthenticated on the backend? Could the redirect still be wrong? If yes, the test is probably asserting on the wrong thing.

I use this rule constantly when reviewing AI-generated tests too. AI is good at producing activity. It is much weaker at choosing assertions that actually prove the business behavior. That is exactly the pattern I called out in my breakdown of AI-generated test suites.

2. A good test has one clear reason to fail

Another common failure mode is the “everything test” that tries to validate half a workflow at once. Those tests feel efficient when you write them. They become miserable when they fail.

    @Test
    public void updatesProfile() {
        profilePage.updateEmail("new@example.com");
        profilePage.updatePhone("555-1111");
        profilePage.updateAddress("1 King Street");

        assertEquals("new@example.com", profilePage.getEmail());
        assertEquals("555-1111", profilePage.getPhone());
        assertEquals("1 King Street", profilePage.getAddress());
    }

If this fails, what broke? Email? Address validation? Save timing? A stale page object? You do not know yet. The test name says one thing, the body verifies three concepts, and the failure sends you into triage mode immediately.

One concept per test beats one click-path per test

I do not mean you can only have one assertion. I mean all assertions should support the same behavioral claim.

Good:

- “should reject invalid postal code”

- “should persist updated phone number”

- “should display rate limit message after five failed attempts”

Bad:

- “updates profile successfully”

That vague style is how teams end up with bloated tests that mix validation, formatting, navigation, and persistence into a single blob. The test becomes harder to debug and easier to ignore.
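As a rough sketch of what splitting by concept can look like, the example below gives each behavioral claim its own test. Everything in it is assumed for illustration: `Profile` is a hypothetical in-memory stand-in for the page object and backend, the postal code rule is an invented Canadian-style format, and `check` is a small helper used here in place of a test framework's assertions.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical in-memory profile, standing in for the page objects and backend.
class Profile {
    private final Map<String, String> fields = new HashMap<>();

    void updatePhone(String phone) {
        fields.put("phone", phone);
    }

    // Assumed rule for this sketch: a Canadian-style code such as "M5V 2T6".
    void updatePostalCode(String code) {
        if (!code.matches("[A-Z]\\d[A-Z] \\d[A-Z]\\d")) {
            throw new IllegalArgumentException("Invalid postal code: " + code);
        }
        fields.put("postalCode", code);
    }

    String get(String field) {
        return fields.get(field);
    }
}

class ProfileTests {
    // Claim: an updated phone number is persisted.
    static void shouldPersistUpdatedPhoneNumber() {
        Profile profile = new Profile();
        profile.updatePhone("555-1111");
        check("555-1111".equals(profile.get("phone")),
                "Expected phone 555-1111 to be stored, but found " + profile.get("phone"));
    }

    // Claim: an invalid postal code is rejected. Both checks support that one claim.
    static void shouldRejectInvalidPostalCode() {
        Profile profile = new Profile();
        boolean rejected = false;
        try {
            profile.updatePostalCode("12345");
        } catch (IllegalArgumentException expected) {
            rejected = true;
        }
        check(rejected, "Expected postal code 12345 to be rejected");
        check(profile.get("postalCode") == null, "A rejected postal code must not be stored");
    }

    private static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }
}
```

When `shouldRejectInvalidPostalCode` fails, the name alone says which behavior broke, and the phone test keeps reporting independently.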

This is also where naming matters more than people admit. A strong test name acts like a contract for the body. If the name says “should reject expired token” and the body spends half its time asserting sidebar layout, something is off.

3. A good test controls its own state

Bad tests often fail for reasons outside the behavior they are meant to verify. Shared users, reused records, leftover browser state, and hidden ordering dependencies are the usual suspects.

On a retail project, we had a PR suite that passed reliably on a developer laptop and failed intermittently in CI. The culprit was not the app. It was a set of tests quietly sharing the same customer account and stepping on each other’s state whenever the suite ran in parallel.

Test data must be isolated

If two tests mutate the same entity, you do not have two tests. You have one slow race condition.

The fix is usually boring and absolutely worth it:

- unique users per test

- unique order numbers per run

- deterministic setup through APIs or fixtures

- cleanup that is scoped to the record the test created

This is the same mindset behind thread-safe parallel execution. Parallel bugs are rarely magic. They are usually shared state that the framework let you get away with until the suite grew large enough to punish you.
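The isolation rules above can be sketched as a test that creates, mutates, and deletes only its own record. This is a minimal example under stated assumptions: `CustomerApi` is a hypothetical in-memory stand-in for whatever test-data API your system exposes, and the `example.test` addresses are placeholders.

```java
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical stand-in for a test-data API backed by the system under test.
class CustomerApi {
    private final Map<String, String> emailsById = new ConcurrentHashMap<>();

    String createCustomer(String email) {
        String id = UUID.randomUUID().toString();
        emailsById.put(id, email);
        return id;
    }

    void updateEmail(String id, String email) {
        emailsById.put(id, email);
    }

    String emailOf(String id) {
        return emailsById.get(id);
    }

    void delete(String id) {
        emailsById.remove(id);
    }

    int count() {
        return emailsById.size();
    }
}

class IsolatedEmailTest {
    // Each run creates its own customer, mutates only that record,
    // and deletes only that record, so parallel runs do not collide.
    static boolean run(CustomerApi api, String newEmail) {
        String id = api.createCustomer("user-" + UUID.randomUUID() + "@example.test");
        try {
            api.updateEmail(id, newEmail);
            return newEmail.equals(api.emailOf(id));
        } finally {
            api.delete(id); // cleanup scoped to the record this test created
        }
    }
}
```

Because no two runs share a customer, the same test body can execute on many threads against one `CustomerApi` and still leave it empty afterwards.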

Avoid hidden prerequisites

The test should not depend on another test having created a user, primed a cache, or navigated the browser to the right place. If it does, the suite may still go green for a while, but you have built a trap for whoever refactors execution order later.

That is why I dislike test code that reads like a screenplay with invisible setup in @BeforeClass blocks and mutable globals everywhere. It is convenient for the author and expensive for everyone else.
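One way to check for hidden prerequisites is to run the tests in a shuffled order and see whether they still pass. The sketch below assumes a hypothetical `UserDirectory` and a hand-rolled runner instead of a real test framework. The point is only that each test builds the state it needs.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashSet;
import java.util.List;
import java.util.Random;
import java.util.Set;
import java.util.function.BooleanSupplier;

// Hypothetical user directory; each test creates a fresh instance.
class UserDirectory {
    private final Set<String> users = new HashSet<>();

    void register(String name) {
        users.add(name);
    }

    boolean exists(String name) {
        return users.contains(name);
    }

    // Returns true only if the user existed and was removed.
    boolean deactivate(String name) {
        return users.remove(name);
    }
}

class OrderIndependentSuite {
    static boolean registersUser() {
        UserDirectory directory = new UserDirectory();
        directory.register("alice");
        return directory.exists("alice");
    }

    static boolean deactivatesUser() {
        UserDirectory directory = new UserDirectory();
        directory.register("bob"); // explicit prerequisite, created by this test
        return directory.deactivate("bob") && !directory.exists("bob");
    }

    // Runs the tests in a seed-dependent shuffled order; true only if all pass.
    static boolean runShuffled(long seed) {
        List<BooleanSupplier> tests = new ArrayList<>();
        tests.add(OrderIndependentSuite::registersUser);
        tests.add(OrderIndependentSuite::deactivatesUser);
        Collections.shuffle(tests, new Random(seed));
        boolean allPassed = true;
        for (BooleanSupplier test : tests) {
            allPassed &= test.getAsBoolean();
        }
        return allPassed;
    }
}
```

If `deactivatesUser` relied on a user left behind by another test, some shuffle orders would fail. Here every order passes because the setup is visible inside the test itself.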

4. A good test fails in a way that helps you debug

The test is not done when it can go green. It is done when the failure output is useful.

The best teams I have worked with treat failure ergonomics as part of test design:

- descriptive test names

- good screenshots

- meaningful assertion messages

- logs that show the last important action

- reports that make the failure easy to route to the right team

Write assertions that explain the gap

Compare these:

    assertTrue(dashboardPage.isVisible());

    assertTrue(
        dashboardPage.isVisible(),
        "Expected authenticated dashboard after login, but the user remained in an unauthenticated state");

The second version is not dramatic, but at scale it matters. When your nightly suite throws 30 failures into Slack at 7 AM, every bit of clarity reduces triage time. Good failure messages compound the same way bad tests do.

If your UI suite is flaky because of timing, the same principle applies. A failure that says “element not found” is less helpful than a failure tied to the real missed condition. That is part of why teams migrating to Playwright need to understand the async traps that create fake flakiness. Better waiting and better assertions are the same quality conversation.
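To make "a failure tied to the real missed condition" concrete, here is a minimal polling helper whose timeout error names the condition in business terms. It is a generic sketch, not Playwright's built-in waiting or any framework's API: the `Waits` class, the `waitFor` signature, and the 25 ms polling interval are all assumptions made for illustration.

```java
import java.time.Duration;
import java.util.function.BooleanSupplier;

class Waits {
    // Polls until the condition holds or the timeout elapses. On timeout,
    // the error names the condition that was missed instead of reporting
    // a generic "element not found".
    static void waitFor(String description, BooleanSupplier condition, Duration timeout) {
        long deadline = System.nanoTime() + timeout.toNanos();
        while (!condition.getAsBoolean()) {
            if (deadline - System.nanoTime() <= 0) {
                throw new AssertionError(
                        "Timed out after " + timeout.toMillis() + " ms waiting for: " + description);
            }
            try {
                Thread.sleep(25); // assumed polling interval
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new AssertionError("Interrupted while waiting for: " + description);
            }
        }
    }
}
```

A call such as `Waits.waitFor("policy status to become ACTIVE", () -> false, Duration.ofMillis(100))` fails with a message that says which condition never held. That is usually enough to route the failure without opening the logs.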

5. A good test matches the risk of the feature

Not every test needs a full end-to-end verification chain. Some features are low risk and can tolerate lighter checks. Others absolutely cannot.

If the workflow affects money, permissions, policy state, customer communications, or anything compliance-related, I want deeper verification. That might mean:

- checking the API response directly

- verifying persisted state

- validating the downstream event fired

- confirming the user can re-open and see the saved result

For low-risk cosmetic behavior, I am fine with shallower checks. The mistake is using the same lightweight assertion style for high-risk workflows just because it is faster to write.
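For a high-risk workflow, the layered checks listed above might look roughly like this. The sketch is illustrative only: `PolicyBackend`, its `activate` method, the `PolicyActivated` event name, and the 200 status are hypothetical, chosen to mirror the policy example earlier in the article.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical policy backend: returns a status code, persists state, emits events.
class PolicyBackend {
    final Map<String, String> statusByPolicy = new HashMap<>();
    final List<String> events = new ArrayList<>();

    int activate(String policyNumber) {
        statusByPolicy.put(policyNumber, "ACTIVE");
        events.add("PolicyActivated:" + policyNumber);
        return 200;
    }
}

class HighRiskVerification {
    // Returns the list of failed checks; an empty list means every layer held.
    static List<String> verifyActivation(PolicyBackend backend, String policyNumber) {
        List<String> failures = new ArrayList<>();

        // Layer 1: the API response itself.
        int status = backend.activate(policyNumber);
        if (status != 200) {
            failures.add("API returned " + status);
        }
        // Layer 2: the persisted state.
        if (!"ACTIVE".equals(backend.statusByPolicy.get(policyNumber))) {
            failures.add("Persisted status is not ACTIVE");
        }
        // Layer 3: the downstream event.
        if (!backend.events.contains("PolicyActivated:" + policyNumber)) {
            failures.add("PolicyActivated event was not emitted");
        }
        return failures;
    }
}
```

A backend that returns 200 without persisting anything would pass a response-only check and still produce two failures here. That is the kind of miss the deeper verification is meant to catch.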

This is the part junior engineers usually learn only after the first painful miss. A test is not “good” in the abstract. It is good relative to the cost of being wrong.

What I’d do differently when teaching this

If I were onboarding a new SDET today, I would not start with unit test syntax or framework annotations. I would start with examples of false confidence:

- tests that passed while the backend failed

- tests that failed because of shared data

- tests that technically asserted something but proved nothing useful

Once people see that pattern, the rest makes more sense. Good tests are not about elegance. They are about trust. The build is only useful if the team believes green means something real.

Your next step

Open one test in your suite that almost never fails. Ask three questions:

- What business behavior is this really supposed to prove?

- What could still be broken if this test passes?

- If it failed tomorrow, would the failure output tell me where to look?

If the answers are weak, rewrite that single test this week. Do not start with fifty. One high-risk test rewritten well will teach your team more than another generic “best practices” checklist ever will.