Shift-Left Is a Lie If Only QA Shifted

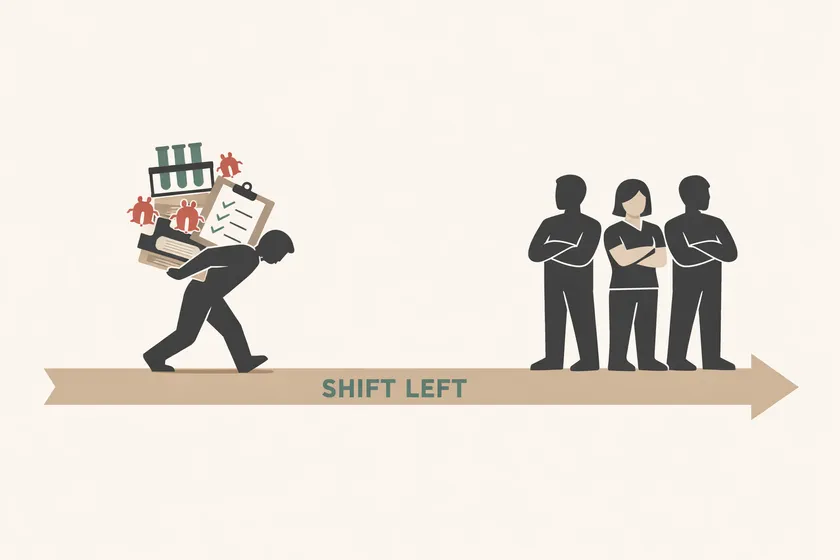

Every team I’ve consulted with in the last three years claims to do shift-left testing. Most of them are lying — not intentionally, but structurally. They shifted QA’s workload earlier in the cycle without shifting developer accountability to match. The result isn’t earlier bug detection. It’s QA doing more work, sooner, with less support.

The Promise vs. the Pattern

Shift-left is one of the most repeated ideas in modern QA strategy. It shows up in job postings, conference talks, consulting decks, and every engineering manager’s vocabulary. The core idea is sound: move testing activities earlier in the software development lifecycle so you catch defects when they’re cheapest to fix. Nobody argues with that.

But here’s what actually happens. Leadership announces a shift-left initiative. QA starts attending sprint planning. SDETs begin writing tests earlier in the cycle. Maybe the team sets up CI pipelines that run tests on every PR. On paper, testing shifted left. In practice, the only thing that shifted was who does more work and when. Developers continue designing features without considering testability. They merge PRs that break existing tests and file tickets for QA to fix them. They skip test review in code reviews. The accountability structure — who owns test quality, who fixes what breaks, who considers testability during design — hasn’t moved an inch.

When shift-left testing accountability falls entirely on QA, you get a predictable outcome: QA burns out maintaining a growing suite of tests they didn’t break, test failures erode trust because nobody investigates them, and six months later leadership concludes “shift-left doesn’t work for us.” The idea would have worked fine. You just never actually did it.

| What teams think shift-left means | What shift-left actually requires |
|---|---|
| QA writes tests earlier in the sprint | Devs and QA co-own test creation and maintenance |
| QA attends sprint planning | QA input changes acceptance criteria and design |
| QA files bugs sooner | Testability is a design requirement, not an afterthought |
| Tests run in CI before merge | Test failures block the PR author, not just QA |

Here are four symptoms I’ve seen repeatedly that tell you accountability never shifted.

1. “Tests Broke? Tell QA.”

A developer merges a PR that refactors a checkout flow. The component hierarchy changes, two API contracts shift, and the test selectors that pointed at the old structure now point at nothing. Eight tests fail in the next CI run. The developer’s response? File a JIRA ticket: “Tests need updating — assigning to QA.”

At a large financial services client, I watched this play out on a 12-person team with 6 developers and 2 SDETs. The SDETs were responsible for maintaining test coverage across all 3 squads’ PRs. Every sprint, developers would merge UI changes and API updates, and the SDETs would inherit a backlog of broken tests they didn’t cause. The tests were never “theirs” in the developers’ minds — they were QA artifacts, like documentation someone else maintains.

This is the most fundamental symptom. If a developer can break a test and walk away without consequence, the test is not part of the development process. It’s a downstream service request.

What shared ownership looks like: the PR that breaks a test doesn’t merge until the same developer fixes the test. Test failures in CI block the PR author, not a separate team. If the developer doesn’t know how to fix the test, they pair with an SDET — but the responsibility stays with the person who introduced the change.
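Here is a minimal sketch of that rule, assuming a CI step that already knows the PR author and which tests failed. The names `PullRequest`, `GateDecision`, `gate_merge`, and the user handles are hypothetical and not taken from any real CI system. The point is only that a failing test blocks the author and the SDET is offered as a pairing partner, not as the new owner.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PullRequest:
    """Hypothetical PR record; a real CI system would supply its own."""
    author: str
    failing_tests: List[str] = field(default_factory=list)


@dataclass
class GateDecision:
    can_merge: bool
    fix_owner: Optional[str]      # who is responsible for fixing the tests
    pairing_offer: Optional[str]  # optional helper, never the owner


def gate_merge(pr: PullRequest, sdet_on_call: str) -> GateDecision:
    """Block the merge on any failing test and keep ownership with the author."""
    if not pr.failing_tests:
        return GateDecision(can_merge=True, fix_owner=None, pairing_offer=None)
    # The failure blocks the author's own PR. The SDET is available for
    # pairing, but responsibility is not reassigned to them.
    return GateDecision(
        can_merge=False,
        fix_owner=pr.author,
        pairing_offer=sdet_on_call,
    )


decision = gate_merge(
    PullRequest(author="dev-alice", failing_tests=["test_checkout_total"]),
    sdet_on_call="sdet-bob",
)
print(decision.can_merge, decision.fix_owner, decision.pairing_offer)
```

In practice the same effect usually comes from marking the test job as a required check on the branch, so the merge button stays disabled for the author until the suite is green.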

2. “We Don’t Have data-testids — That’s a QA Ask.”

Testability isn’t free. It requires engineering investment: stable test selectors like data-testid attributes, well-documented API contracts, feature flags for test isolation, deterministic test data seeding. These are engineering deliverables, not QA nice-to-haves.

But on teams where shift-left is a QA-only mandate, testability requests get treated like enhancement tickets from a downstream consumer. I’ve watched data-testid requests sit in the backlog for 4 months at one client because product wouldn’t prioritize them and engineering saw them as “QA asks.” Meanwhile, the automation team wrote increasingly fragile CSS selectors that broke every time the frontend team updated a component library. Those fragile selectors are exactly how you end up with a green test suite that hides real bugs — tests that pass because they’re asserting on the wrong elements, not because the application works.

What shared ownership looks like: testability is reviewed during design, the same way performance or security is. When a developer designs a new component, they include stable selectors. When a backend team designs an API, they publish a contract that test infrastructure can rely on. These aren’t QA requests — they’re engineering requirements.
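To make the selector point concrete, here is a small sketch using only Python's standard-library HTML parser. The markup, class names, and the `place-order` test id are invented for illustration, and matching on the exact `class` string is a simplification of what a real CSS selector does. It shows a lookup tied to styling classes going empty after a component-library update while the `data-testid` lookup keeps working.

```python
from html.parser import HTMLParser


class ElementFinder(HTMLParser):
    """Collects the tag names of elements whose attribute `name` equals `value`."""

    def __init__(self, name, value):
        super().__init__()
        self.name = name
        self.value = value
        self.matches = []

    def handle_starttag(self, tag, attrs):
        # attrs arrives as a list of (attribute, value) pairs.
        if dict(attrs).get(self.name) == self.value:
            self.matches.append(tag)


def find(html, name, value):
    finder = ElementFinder(name, value)
    finder.feed(html)
    return finder.matches


# Hypothetical markup before and after a component-library update.
BEFORE = (
    '<div class="checkout">'
    '<button class="btn btn-primary" data-testid="place-order">Pay</button>'
    '</div>'
)
AFTER = (
    '<section class="checkout-v2">'
    '<button class="Button_root__x1" data-testid="place-order">Pay</button>'
    '</section>'
)

print(find(BEFORE, "class", "btn btn-primary"))   # ['button']
print(find(AFTER, "class", "btn btn-primary"))    # []  (styling class renamed)
print(find(AFTER, "data-testid", "place-order"))  # ['button']
```

The test id survives because it is a contract the developer commits to, while the class name is a styling detail that nobody promised to keep stable.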

3. “QA Should’ve Caught That.”

A bug ships to production. The incident post-mortem happens. Someone asks the question: “Why didn’t our automated tests catch this?” And the room looks at QA.

But here’s what actually happened: the feature was designed without test hooks. The PR was merged without anyone reviewing whether the existing tests still covered the changed behavior. The test was written after the fact, against a UI that changes every sprint, using selectors that were already one redesign away from breaking. QA wrote the best test they could with the hooks they were given — which is to say, almost none.

I’ve seen this post-mortem pattern at three different enterprise clients. The conversation always focuses on “why didn’t QA catch it?” and never on “why was this feature untestable?” The difference matters. The first question assigns blame to the people furthest from the design decision. The second question traces the problem to its root — a design process that didn’t consider testing as a constraint.

This is the same dynamic that lets intermittent test failures get written off as “flakiness” instead of being investigated. The test is telling you something. Whether you listen depends on who you hold accountable.

What shared ownership looks like: post-mortems ask “why was this untestable?” as a standard question. If a feature ships without test coverage, the root cause analysis traces back to the design phase — not the QA phase.

4. “We Added QA to Sprint Planning — That’s Shift-Left, Right?”

This is the most common and most insidious symptom. QA now attends sprint planning, backlog refinement, and design reviews. On the surface, that’s shift-left. QA is involved earlier. Box checked.

But attendance without influence is performative. If the QA lead raises a testability concern during refinement — “this design doesn’t have any way to verify state transitions without going through the full UI” — and the ticket ships unchanged, QA’s presence in the meeting was cosmetic. They attended. They spoke. Nothing changed.

At one enterprise client, the QA backlog grew from roughly 15 test-update tickets to over 40 in the span of 2 sprints after the team “shifted left.” The SDETs were now attending more meetings, providing input earlier, and inheriting even more maintenance work because their input didn’t actually shape the acceptance criteria or the definition of done. They had more visibility into what was coming, but zero authority to change how it was built.

What shared ownership looks like: QA input shapes what gets built. Testability considerations appear in the ticket before development starts. The definition of done includes “tests updated or created by the PR author.” QA doesn’t just attend the meeting — their feedback changes the output.
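The refinement concern quoted earlier, verifying state transitions without going through the full UI, is the kind of thing that can be settled in the ticket. As a rough sketch, assuming a checkout-style flow whose states and events I have made up for illustration, the transitions can live in a plain function that a test calls directly:

```python
from enum import Enum


class OrderState(Enum):
    # Hypothetical states; the real flow would define its own.
    CART = "cart"
    PAYMENT_PENDING = "payment_pending"
    PAID = "paid"
    CANCELLED = "cancelled"


# Allowed transitions kept as plain data so tests can exercise them directly.
TRANSITIONS = {
    (OrderState.CART, "submit"): OrderState.PAYMENT_PENDING,
    (OrderState.CART, "cancel"): OrderState.CANCELLED,
    (OrderState.PAYMENT_PENDING, "payment_ok"): OrderState.PAID,
    (OrderState.PAYMENT_PENDING, "cancel"): OrderState.CANCELLED,
}


def next_state(state, event):
    """Return the state reached by `event`, or raise if it is not allowed."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"{event!r} is not allowed in state {state.name}") from None


# A transition check that needs no browser, no selectors, and no UI.
assert next_state(OrderState.CART, "submit") is OrderState.PAYMENT_PENDING
```

Whether the logic ends up in a function like this or behind an API endpoint matters less than the fact that the decision was made during design, with QA's input, instead of being discovered after the ticket shipped.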

Where Shift-Left Does Work

I don’t want this to read as a case against shift-left. The concept is sound, and I’ve seen it work — genuinely work — on teams that did it properly.

The teams where shift-left delivers on its promise share specific traits. Developers run the test suite locally before opening a PR and treat failures as their problem. Testability is a first-class design concern reviewed alongside performance and security. When a test breaks, the person who caused the breakage investigates it, even if that means pairing with an SDET to understand the test framework. Test failures in CI block the PR author’s merge, not just the QA dashboard.

The difference between these teams and the ones I described above isn’t the ceremonies or the tooling. It’s the accountability structure. The work shifted and the ownership shifted.

The Real Shift

Shift-left was never supposed to mean “QA starts testing earlier.” It was supposed to mean “the whole team owns quality earlier.” Somewhere along the way, most organizations kept the first interpretation and discarded the second.

If you want to know whether your team has actually shifted left, ignore the ceremonies and the tooling. Ask one question: who fixes the tests when a developer’s PR breaks them? If the answer is always QA, you haven’t shifted left. You’ve shifted QA’s workload left and kept everyone else exactly where they were.

If you’re a QA lead reading this and nodding along, this post was written for both you and your engineering manager. Forward it. The conversation about shared ownership starts with naming the gap.

Frequently Asked Questions

What does shift-left mean in testing?

Shift-left means moving testing activities earlier in the software development lifecycle — from after-the-fact verification to in-process quality engineering. But it requires structural change, not just earlier QA involvement. If only the QA team’s schedule shifted and developer workflows stayed the same, the initiative missed its point.

How do you measure if shift-left is working?

Look at three things: who owns test maintenance (is it shared or QA-only?), whether testability appears in design reviews as a real constraint, and whether test failures in CI block the PR author or just generate tickets for QA. If all three point to shared ownership, your shift-left is working. If they all point to QA, it isn’t.
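If it helps to write the three checks down, here is a tiny sketch. The class and field names are hypothetical, and the "partial" verdict for mixed answers is my own assumption, since the rule above only covers the all-or-nothing cases.

```python
from dataclasses import dataclass


@dataclass
class ShiftLeftSignals:
    test_maintenance_shared: bool       # shared, or QA-only?
    testability_in_design_review: bool  # treated as a real design constraint?
    ci_failures_block_author: bool      # or do they just create QA tickets?


def assess(signals: ShiftLeftSignals) -> str:
    """Summarize the three ownership signals as a one-word verdict."""
    shared = sum([
        signals.test_maintenance_shared,
        signals.testability_in_design_review,
        signals.ci_failures_block_author,
    ])
    if shared == 3:
        return "working"
    if shared == 0:
        return "not working"
    # Assumption: mixed answers mean ownership has only partly shifted.
    return "partial"


print(assess(ShiftLeftSignals(True, True, True)))     # working
print(assess(ShiftLeftSignals(False, False, False)))  # not working
print(assess(ShiftLeftSignals(True, False, True)))    # partial
```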

Who is responsible for test maintenance in shift-left?

In a properly implemented shift-left model, the developer who introduces a change that breaks a test is responsible for fixing that test — or pairing with an SDET to fix it. QA engineers own test strategy, framework architecture, and coverage planning. The day-to-day test maintenance is a shared responsibility tied to whoever introduced the breaking change.