Claude Code Has 2 Primitives, Not 3 — Use Skills First

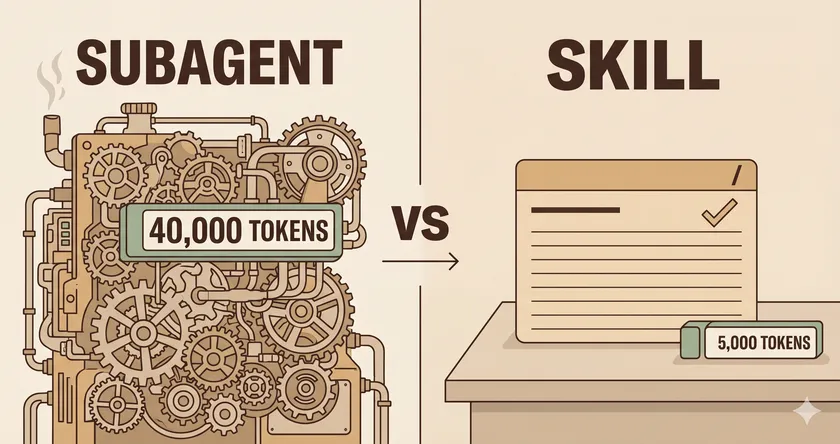

The most expensive mistake I see in Claude Code setups is building a subagent where a skill would do. The token cost runs an order of magnitude higher. The latency is roughly 12× worse. And half the time, the “subagent” is just a recipe pretending to be autonomous. Here’s the part most people miss: as of Claude Code v2.1.3, there are only two extensibility primitives, not three.

Anthropic quietly merged slash commands into skills in late 2025. A file at .claude/commands/deploy.md and a skill at .claude/skills/deploy/SKILL.md now both create the same /deploy command and behave identically. Boris Cherny from the Claude Code team said it plainly on X: “Skills and slash commands are the same thing.” The official docs reflect this, but the community has been slow to catch up — every “Claude Code 101” post still treats them as three separate primitives. If you’re making architecture decisions based on that mental model, you’re solving the wrong problem.

After consulting with enterprise QA teams wiring Claude Code into their test pipelines, I see the same pattern: engineers reach for subagents because “agent” sounds more capable. What they actually need, nine times out of ten, is a skill.

What Actually Changed: Slash Commands Are Just Skills

Before v2.1.3, the community treated three primitives as distinct: subagents (.claude/agents/*.md), slash commands (.claude/commands/*.md), and skills (.claude/skills/<name>/SKILL.md). The merger collapsed commands into skills, and Anthropic kept the old directory for backward compatibility. The practical effect:

- Any file in .claude/commands/ works, but the recommended path is now .claude/skills/

- The frontmatter schema is unified: name, description, allowed-tools, argument-hint, disable-model-invocation, and user-invocable all work the same way

- The /my-skill shortcut still fires when you type it, but the model can also auto-invoke the skill if the description matches

- If both a command and a skill exist with the same name, the skill wins

That leaves two primitives with a real semantic difference: skills (procedural knowledge the model loads on demand) and subagents (isolated execution contexts the model dispatches to). The rest is surface area.
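If you want to move legacy commands to the recommended location, the migration can be sketched in a few lines of Python. This is a minimal sketch, not an official tool: `migrate_commands_to_skills` is a hypothetical helper, it assumes the command file can be copied unchanged because both layouts behave identically, and it only looks at top-level files in .claude/commands/.

```python
from pathlib import Path
import shutil


def migrate_commands_to_skills(project_root: Path) -> list[Path]:
    """Copy each .claude/commands/<name>.md to .claude/skills/<name>/SKILL.md.

    Hypothetical helper. Assumes the file body needs no changes, and leaves
    existing skills untouched because a skill already wins over a command
    with the same name.
    """
    commands_dir = project_root / ".claude" / "commands"
    skills_dir = project_root / ".claude" / "skills"
    created: list[Path] = []

    if not commands_dir.is_dir():
        return created

    for command_file in sorted(commands_dir.glob("*.md")):
        target = skills_dir / command_file.stem / "SKILL.md"
        if target.exists():
            continue  # the skill takes precedence; do not overwrite it
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copyfile(command_file, target)
        created.append(target)

    return created
```

The originals are copied rather than moved, so the commands/ directory keeps working until you have checked the new skills and deleted the old files yourself.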

What’s the Actual Difference Between a Skill and a Subagent?

A skill is instructions plus bundled files that the model reads into its own context when a description matches. A subagent is a whole new Claude session with its own context window, its own tool access, and no memory of the parent conversation — it reports back a summary and nothing else.

Three concrete differences drive every decision:

Context: shared vs isolated

Skills run in your current context. Their metadata is always loaded into the system prompt, and the full body loads only when triggered — a progressive disclosure model that keeps the token footprint small. Subagents run in a fresh isolated context — everything they need must be passed in at invocation time, and everything they produce gets distilled into a single return message.
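To make progressive disclosure concrete, here is a toy model of how much context a set of installed skills occupies. It is a sketch under stated assumptions: the `Skill` class, the `context_footprint` function, and every token count are illustrative placeholders, not measurements or anything from Claude Code itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    metadata_tokens: int  # name + description, always in the system prompt
    body_tokens: int      # SKILL.md body, loaded only when the skill triggers


def context_footprint(skills: list[Skill], triggered: set[str]) -> int:
    """Tokens the installed skills occupy in the current context."""
    total = 0
    for skill in skills:
        total += skill.metadata_tokens
        if skill.name in triggered:
            total += skill.body_tokens
    return total


# Illustrative numbers only, not measurements.
installed = [
    Skill("deploy", metadata_tokens=60, body_tokens=1_500),
    Skill("flaky-test-classifier", metadata_tokens=90, body_tokens=4_000),
    Skill("release-notes", metadata_tokens=50, body_tokens=2_000),
]

idle = context_footprint(installed, triggered=set())
one_active = context_footprint(installed, triggered={"flaky-test-classifier"})
everything = context_footprint(installed, triggered={s.name for s in installed})

print(idle, one_active, everything)  # 200 4200 7700
```

With nothing triggered, only the metadata is paid for. The gap between the first and last number is what progressive disclosure saves when most skills stay dormant.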

Invocation: who decides

Skills are model-invoked by default (Claude picks them up when the description matches your task) but can also be user-invoked via /skill-name. Subagents are dispatched through the Agent tool (renamed from Task in v2.1.63) — Claude decides to hand off work when it matches the subagent’s description, or you can force it with @agent-name.

Cost: orders of magnitude different

This is where most teams get hurt. A subagent invocation carries the full cold-start cost of a new context plus the per-turn cost of its own tool calls. Users have measured 15× to 75× token overhead on simple tasks — one reported 153k subagent tokens against 2-3k for the equivalent main-thread task. Anthropic’s own research on multi-agent systems reports around 15× the token usage of single-turn chats. Martin Garramon’s published benchmark puts wall-clock time at 10 seconds per skill invocation vs 2 minutes per subagent invocation for the same task.

- Subagent token overhead: 15-75×

- Skill invocation latency: ~10s

- Subagent invocation latency: ~2min
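The ratios behind those figures are simple division, and it is worth running them once to see where the numbers come from. The sketch below uses only the values quoted above; `overhead_ratio` is a hypothetical helper name.

```python
def overhead_ratio(subagent: float, baseline: float) -> float:
    """How many times larger the subagent figure is than the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return subagent / baseline


# Token report quoted above: 153k subagent tokens vs 2-3k on the main thread.
token_ratio_low = overhead_ratio(153_000, 3_000)
token_ratio_high = overhead_ratio(153_000, 2_000)

# Latency benchmark quoted above: ~2 minutes vs ~10 seconds.
latency_ratio = overhead_ratio(120, 10)

print(f"tokens: {token_ratio_low:.1f}x to {token_ratio_high:.1f}x")  # tokens: 51.0x to 76.5x
print(f"latency: {latency_ratio:.0f}x")                              # latency: 12x
```

That single report sits at the upper end of the 15× to 75× range, and the latency ratio is where the "roughly 12×" figure comes from.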

Why Do Claude Code Subagents Burn So Many Tokens?

Because subagents start from zero. Every time you dispatch one, it rebuilds context by reading files, running searches, and making follow-up tool calls, which is work the main thread has usually already done. The “isolation” that makes them safe also makes them expensive.

Here’s the pattern I see on enterprise test teams. Someone builds a subagent called flaky-test-investigator — the idea is noble: when a Playwright test fails, the agent reads the trace, classifies the failure (stale selector, race condition, network flake), and suggests a fix. On paper it sounds like a good subagent — multi-step, requires judgment, runs in the background.

In practice, every invocation does the same dance:

```markdown
---
name: flaky-test-investigator
description: Investigate flaky Playwright test failures and suggest fixes
tools: Read, Grep, Bash
---

You are a test failure investigator. Read the trace file, classify
the failure type, and suggest a fix. Look for stale selectors,
race conditions, network flakes, and timing issues.
```

Each CI run, the agent reads the trace file (20k tokens), runs Grep across the test directory (10k tokens), opens three or four source files to verify hypotheses (10-50k tokens), and returns a one-paragraph diagnosis. 40k-80k tokens per invocation, two minutes of wall-clock time, and the output is the same 80% of the time: “stale selector, update the data-testid.”
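The per-invocation budget adds up as follows. This is a small sketch using the step estimates from the breakdown above; the `runs_per_day` value of 50 is a hypothetical CI volume chosen only to show how the cost scales.

```python
# (low, high) token estimates per step, taken from the breakdown above.
STEPS: dict[str, tuple[int, int]] = {
    "read trace file": (20_000, 20_000),
    "grep test directory": (10_000, 10_000),
    "open 3-4 source files": (10_000, 50_000),
}


def invocation_range(steps: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low and high estimates across all steps of one invocation."""
    low = sum(lo for lo, _ in steps.values())
    high = sum(hi for _, hi in steps.values())
    return low, high


def daily_range(steps: dict[str, tuple[int, int]], runs_per_day: int) -> tuple[int, int]:
    """Scale the per-invocation range by the number of runs per day."""
    low, high = invocation_range(steps)
    return low * runs_per_day, high * runs_per_day


print(invocation_range(STEPS))              # (40000, 80000)
print(daily_range(STEPS, runs_per_day=50))  # (2000000, 4000000), hypothetical volume
```

At that assumed volume, the subagent spends millions of tokens a day to arrive at the same diagnosis most of the time.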

That’s not autonomous investigation. That’s pattern matching dressed up as reasoning. The reference material is small and static. The decision tree is shallow. The output is constrained. It’s a skill wearing an agent costume.

When Should You Actually Reach for a Subagent?

Reach for a subagent when you need isolated context, parallelism, or adversarial review. Everything else is a skill.

Three workflows where subagents genuinely earn their cost:

- Parallel research across independent questions — dispatching multiple subagents to check unrelated things at once is faster than serializing them in the main thread.

- Context protection on large result sets — if a tool call will return a 50k-token blob that you only need to summarize, do it in a subagent so the blob never hits your main context.

- Adversarial review — a “code reviewer” or “advisor” subagent that hasn’t seen your chain of thought can catch errors you’d rationalize past. This one I use heavily.

Everything else — bundled instructions, decision trees, procedural workflows, code scaffolding — is a skill. If you’re unsure, default to a skill and upgrade only when you hit a concrete limit. The same logic shows up in the test automation work I described in why AI-generated test suites still need human review — when the decision tree is shallow, “AI autonomy” is just a more expensive version of a checklist.
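The rule is simple enough to write down as a function. This is a sketch of the heuristic as described here, not anything Claude Code runs internally; the `Task` fields and `choose_primitive` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    needs_isolated_context: bool = False     # e.g. a large blob you only need summarized
    parallel_independent_work: bool = False  # unrelated questions that can run at once
    needs_adversarial_review: bool = False   # a reviewer that has not seen your reasoning


def choose_primitive(task: Task) -> str:
    """Default to a skill; pick a subagent only for the three listed cases."""
    if (
        task.needs_isolated_context
        or task.parallel_independent_work
        or task.needs_adversarial_review
    ):
        return "subagent"
    return "skill"


print(choose_primitive(Task()))                               # skill
print(choose_primitive(Task(needs_adversarial_review=True)))  # subagent
```

The defaults are deliberate: every field starts as False, so a task has to argue its way into a subagent rather than out of one.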

The pattern works in test automation contexts the same way it works in the Copilot context-starvation problem: deterministic procedures belong in files the model loads on demand, not in autonomous workers that rebuild context every run.

Why Don’t My Claude Code Skills Auto-Trigger?

Because the description field is the entire trigger mechanism, and most people write it as a summary instead of a description of when to use the skill. Skills don’t auto-activate on keyword matches — they activate when the model reads the description and decides “this situation matches.”

This is a real, documented problem. There’s even a GitHub issue where Claude itself admits: “The system is set up correctly. The trigger conditions are clear. I just… didn’t do it.” The fix is always the same — rewrite the description.

How Do I Write a Skill Description the Model Will Actually Invoke?

Three rules, earned the hard way:

- Lead with “Use when…” — it primes the model to evaluate fit against the current task. Anything else reads like a label.

- List concrete symptoms or keywords — specific error messages, file paths, or artifacts that appear when this skill should fire. Vague descriptions miss; concrete ones catch.

- Keep the combined description + when_to_use text under 1,536 characters: the skill listing truncates it at that point, and anything cut off isn’t part of the trigger.
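These rules are mechanical enough to check before you commit a skill. Below is a minimal linter sketch. `lint_skill_description` and its `trigger_terms` parameter are hypothetical, the 1,536-character limit is the figure cited above, and summing the two field lengths is an assumption about how the combined text is measured.

```python
MAX_LISTING_CHARS = 1_536  # truncation limit cited above


def lint_skill_description(
    description: str,
    when_to_use: str = "",
    trigger_terms: tuple[str, ...] = (),
) -> list[str]:
    """Return warnings for a skill description; an empty list means it passes."""
    warnings: list[str] = []

    # Rule 1: lead with "Use when..." so the text reads as a trigger, not a label.
    if not description.strip().lower().startswith("use when"):
        warnings.append('description should lead with "Use when..."')

    # Rule 2: each concrete symptom you expect to fire the skill should appear.
    combined_text = f"{description} {when_to_use}".lower()
    for term in trigger_terms:
        if term.lower() not in combined_text:
            warnings.append(f"missing trigger term: {term}")

    # Rule 3: stay under the listing limit (assumed here: sum of both lengths).
    combined_length = len(description) + len(when_to_use)
    if combined_length > MAX_LISTING_CHARS:
        warnings.append(
            f"combined length {combined_length} exceeds {MAX_LISTING_CHARS} characters"
        )

    return warnings


print(lint_skill_description("Investigate flaky Playwright test failures and suggest fixes"))
# ['description should lead with "Use when..."']
```

Passing the symptoms explicitly keeps the check honest: the linter cannot know which error messages matter, so you list them, and it confirms they made it into the description.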

A skill that replaces the flaky-test subagent above:

```markdown
---
name: flaky-test-classifier
description: |
  Use when a Playwright or Selenium test fails intermittently or shows
  trace symptoms of flakiness: stale selectors, TimeoutError, detached
  element handles, network timeouts, or race conditions between DOM
  updates and assertions.
allowed-tools: Read, Grep
---

Classify the failure by matching the trace output to one of five
patterns (see references/patterns.md) and emit a diagnosis with
the specific fix...
```

Same accuracy on classification, 5k tokens instead of 40k, and it runs in the user’s current context without a cold start. The model triggers it reliably because the description maps directly to what the user is actually looking at: a failing trace file.

If you’re building Claude Code workflows into a flaky-test investigation pipeline, the same principle applies to the test strategy itself — see how I handle race conditions in real suites for the non-AI side of that work.

The One-Line Rule

Next time you feel the urge to reach for a subagent, ask yourself: does this need isolated context, parallelism, or an adversarial second opinion? If the answer is no, it’s a skill. Write the description as a “use when…” trigger, bundle any reference material as separate files, and let progressive disclosure keep your context window clean.

The subagents you keep should be the ones that genuinely need isolation. Everything else is overhead pretending to be sophistication.

Are slash commands and skills the same thing in Claude Code in 2026?

Yes. Since Claude Code v2.1.3, commands have been merged into skills. A file at .claude/commands/deploy.md and a skill at .claude/skills/deploy/SKILL.md both create /deploy and behave identically. The .claude/skills/ path is the recommended location going forward; the commands/ path still works for backward compatibility.

Can a subagent invoke a skill?

Yes, two ways. A subagent can declare skills: in its frontmatter to preload them at startup, and a skill can run its own body inside a subagent via the context: fork and agent: fields. Skills and subagents compose rather than compete.

Why do my Claude Code skills not auto-activate?

Because the description field doesn’t describe the trigger condition clearly. Skills activate when the model matches the description to the current task — not on keyword matches. Rewrite the description starting with “Use when…” and list concrete symptoms, error messages, or file types that should fire the skill.

When is a subagent actually worth the token cost?

Three cases: parallel research across independent questions (multiple subagents racing in parallel beats serial main-thread work), context protection on large result sets (a 50k-token blob you only need to summarize should never hit your main context), and adversarial review (a subagent that hasn’t seen your chain of thought catches errors you’d rationalize past). Everything else is a skill.

Where should custom skills live — project or user directory?

Project skills live in .claude/skills/ and are checked into git for team sharing. Personal skills live in ~/.claude/skills/ and stay on your machine. Enterprise-managed skills override both. Start at the project level if the skill encodes team conventions — that’s where the leverage is.